Mathematics of Fundamental Formula of Gambling, Logarithms and "God"


By Ion Saliu, Founder of Gambling Mathematics, Founder of Probability Theory of Life

1. Logical Steps, Algorithm to the Fundamental Formula of Gambling

First captured by the WayBack Machine (web.archive.org) on May 10, year of grace 2000.

"Let no one enter here who is ignorant of mathematics."

(The frontispiece of Plato's Academy)

"The most important questions of life are, for the most part, really only problems of probability."

(Pierre Simon Marquis de Laplace, "Théorie Analytique des Probabilités")

Here is how I arrived at the by now famous Fundamental Formula of Gambling (FFG). When laypersons say: "It is so simple," it always represents undeniable mathematics; therefore, undeniable Truth. I thought I had worked it out on my own, because the formula starts with the very essence of probability theory: p = n/N, or, reduced, the mathematical relation p = 1/N. A truth becomes (almost) self-evident when a number of people think independently of the same thing. But this human law must be the most undeniable of them all: No Truth is self-evident AND no human thinks totally independently of others.

My first step got my feet wet in my pick-3 lottery software pond. "Probably win," that's what I had thought.

I reasoned in this manner. The probability of any 3-digit combination is 1/1000. Therefore, I had expected that the repeat (skip) median of a long series of pick-3 drawings would be 500. It would be similar to coin tossing, where the median skip of a long series with p = 1/2 is 1. To my surprise, the repeat median of a long series of pick-3 drawings was not 500. It was closer to 700.

I checked it for series of 1000 real drawings and also randomly generated drawings. Then I checked the median against series of 10000 (10 thousand) drawings. The median of the skip was always close to 700. Do not confuse it with the median combination in the set. That value of the median is, in fact, either 499 or 500. The correct expression is 4,9,9 or 4 9 9 or 5,0,0 (three separate digits).

What is that median useful for, anyway? Among other properties, the skip median (or median skip) shows within how many drawings a pick-3 combination typically hits. Any pick-3 combination hits within 692 drawings in roughly 50% of the cases. Equivalently, if you play one pick-3 combination, there is a roughly 50% chance it will hit within 692 drawings, or it will repeat no later than 692 drawings. The chance is also (almost) 50% that you will have to wait more than 692 drawings for your number to hit.
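The skip median can be checked with a small simulation. The following is only a sketch under stated assumptions: drawings are uniformly random over 1000 combinations, a "skip" is counted as the number of drawings from one hit of a combination to its next hit, and the function name, seed, and series length are illustrative choices, not taken from the author's software.

```python
import random
from statistics import median

def simulate_skip_median(num_drawings=300_000, num_combinations=1000, seed=2000):
    """Estimate the median skip between repeats of the same combination."""
    rng = random.Random(seed)
    last_seen = {}   # combination -> index of the drawing where it last hit
    skips = []       # gaps (in drawings) between consecutive hits of a combination
    for drawing in range(num_drawings):
        combo = rng.randrange(num_combinations)
        if combo in last_seen:
            skips.append(drawing - last_seen[combo])
        last_seen[combo] = drawing
    return median(skips)

# The estimate should land near 700 rather than near 500.
print(simulate_skip_median())
```

A longer series narrows the spread of the estimate; a different seed shifts it by a few drawings.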

I studied theory of probability (gambling mathematics too!) in high school and in college. Some things get imprinted on our minds. Such information becomes part of our axioms. An axiom is a self-evident truth, a truth that does not necessitate demonstration. We operate with axioms in a manner of automatic thinking.

So, I was analyzing mathematically long pick-3 series, where p = 1/1000. Next, I wrote the probability formula of a single pick-3 number to hit two consecutive drawings: p = 1/1000 × 1/1000 = 1/1000000 (1 in 1 million). I have never found it useful to work with very, very small numbers in probability.

How about the reverse? The probability of a particular pick-3 number NOT to hit is $p = 1 - 1/1000 = 999/1000 = 0.999$. This is a very large number. It is almost certain that my pick-3 combination will not hit the very first time I play it.

How about not hitting two times in a row? $P = (1 - 1/1000)^2 = 0.999^2 \approx 0.998$. Still, a very large number! I reversed the approach one more time: What is the opposite of not hitting a number of consecutive drawings? It is winning within a number of consecutive drawings.

The knowledge was inside my head. Unconsciously, I used Socrates' dialectical method of delivering the truth. (His mother delivered babies.) I also followed steps in de Moivre's formula. At this point, I had this relation:

$$1 - (1 - p)^N$$

where N represents the number of consecutive drawings. I thought that for N = 500 drawings, the expression above should give the median, or a probability of 50%. So, I calculated $1 - (1 - p)^N = 1 - (1 - 1/1000)^{500} = 1 - 0.999^{500} = 0.3936 = 39.36\%$. Thus, my relation became:

$$0.3936 = 1 - (1 - 1/1000)^{500}$$

I made N = 692. I obtained the value:

$1 - (1 - 1/1000)^{692} = 1 - 0.5004 = 49.96\%$ (just under 50%).

Next step: I made N = 693. I obtained the degree of certainty:

$1 - (1 - 1/1000)^{693} = 1 - 0.4999 = 50.01\%$ (very, very close to 50%).

Thus, the parameter I call the FFG median is between 692 and 693 for the pick-3 lottery.
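The trial-and-error steps above are easy to reproduce. This is a minimal sketch; the function name `degree_of_certainty` is an illustrative choice, not the name used in the author's programs.

```python
def degree_of_certainty(p, n):
    """Chance that an event of probability p appears at least once in n trials."""
    return 1.0 - (1.0 - p) ** n

# Pick-3 lottery: p = 1/1000, tried for the three values of N discussed above.
for n in (500, 692, 693):
    print(n, f"{degree_of_certainty(1 / 1000, n):.2%}")
```

The value for N = 500 falls well short of 50%, while N = 692 and N = 693 bracket the 50% mark.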

2. The Mathematical Solution: Divine Logarithms

I concluded I should not make more assumptions. What if I don't think I know what N should be for the median (50%), or for any other chance, which I simply called the degree of certainty? I realized I had the liberty to select whatever degree of certainty I wanted to, and only had to calculate N. The relationship became:

DC = 1 (1 p) N

Then:(1 p) N = 1 DC

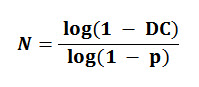

The equation can be solved using logarithms:

The only unknown is N: the number of consecutive drawings (or trials) within which an event of probability p will appear at least once with the degree of certainty DC.
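Solving for N takes one line of code. The sketch below assumes the logarithmic form N = log(1 − DC) / log(1 − p), which matches the formula quoted later from Marcel Boll's book; the function name `ffg_trials` is hypothetical.

```python
import math

def ffg_trials(p, dc):
    """Number of trials N solving DC = 1 - (1 - p)**N; the result may be fractional."""
    return math.log(1.0 - dc) / math.log(1.0 - p)

# Pick-3 lottery at the median (DC = 50%).
n = ffg_trials(1 / 1000, 0.5)
print(round(n, 2), math.ceil(n))
```

The fractional result lies between 692 and 693; rounding up gives the first whole number of drawings that reaches the chosen degree of certainty.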

The rest is history. I called the relation The Fundamental Formula of Gambling almost automatically. Unintentionally, it might sound cocky. Just refer to it as FFG.

Nothing comes with absolute certainty, but to a degree of certainty! That's mathematics, and that's the only TRUTH.

3. Mathematics of the God Concept: The Formula of Absurdity

Unfortunately, O glorious sons and daughters of Logos and Axioma, the idea of God is a mathematical absurdity! It saddened me at first, but we must come to grips with reality. Humans fictionalize because we feel we can't live without the comfort of absolute certainty.

*Some humans with mathematical skills will stumble upon an error when the degree of certainty DC is set to 100%. There is no absolute certainty in the Universe (or probability equal to 1). It leads to an absurdity: calculating the number of trials necessary for an event of probability p to appear with a degree of certainty equal to 100%. It is absurd. No other qualifications apply, such as impossible or erroneous formula. Just remember the relation we had before considering the degree of certainty! We dealt with the probability of losing N consecutive times: $(1 - p)^N$. In this relation, no N can lead to zero ($1 - 100\%$). Not even minus Infinity! A computer program should trap the error and ask the user to enter a DC less than 100% (things like 99.99999999%).*
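Trapping the error can look like the sketch below. It is an assumption about how such a check might be written, not the author's code; the function name and the messages are hypothetical. In Python, `math.log(0.0)` raises a `ValueError` on its own, but an explicit check gives the user a clearer message.

```python
import math

def ffg_trials_checked(p, dc):
    """FFG with input validation; rejects DC = 100% instead of computing log(0)."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    if not 0.0 <= dc < 1.0:
        raise ValueError("DC must be less than 100%; try e.g. 0.9999999999")
    return math.log(1.0 - dc) / math.log(1.0 - p)

try:
    ffg_trials_checked(1 / 1000, 1.0)   # DC = 100% is rejected
except ValueError as err:
    print("Rejected:", err)

# A DC just below 100% yields a large but finite number of trials.
print(ffg_trials_checked(1 / 1000, 0.9999999999))
```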

Here are some profound thoughts surrounding this mathematical expression and the false error.

The Fundamental Formula of Gambling (FFG) proves that absolute certainty is a mathematical absurdity. If we set the degree of certainty DC = 1 (or 100%), FFG leads to a mathematical absurdity. God is Absolute Certainty, therefore absolute absurdity. I can only imagine de Moivre's reaction when this thought might have crossed his mind: "Certainty is absurd! How can God be True?" It was the 18th century, and the 21st has changed only a little for the better.

Aporia is a special form of absurdity. The Eleatic philosopher Zeno of Elea constructed a most famous aporia. It is known as the Paradox of Achilles and the tortoise. Read the first philosophical, logical, and mathematical solution: Zeno's Paradox: Achilles Can't Outrun the Tortoise?

It was very easy to apply FFG mathematics to many types of lottery and gambling games. It is at the foundation of several high-probability gambling systems I designed: roulette, blackjack, horse racing, and lottery. It works with stocks, too. There is significant randomness in stock evolution. Many stockbrokers came to terms with the reality that all stocks fluctuate in an undeniably random fashion. I am surprised how many brokerage firms have visited my site!

4. Insider Information: It's All in Our Reason

In the year of grace 2001 my memory dug out a real gem. I wrote about it in a post on my message board: Cool stories of the Truth.

I found another treasure: A little book in Romanian. Don't they say great things come in small packages? It couldn't be truer than in this case. The book was The Certainties of Hazard by French academician Marcel Boll. The book was first published in French in 1941. My 100-page copy was the 1978 Romanian edition. It all came to life, like awakening from a dream. The book presented a table very similar to the table on my Fundamental Gambling Formula page. Then, in small print, the footnote: The reader who is familiar with logarithms will remark immediately that N is the result of the mathematics formula: N=log(1-pi)/log(1-p).

That's what I call the Fundamental Formula of Gambling, indeed! Actually, the author, Marcel Boll, did not want to take credit for it. Abraham de Moivre largely developed the formula. Then I remembered more clearly about de Moivre and his formula from my school years. Abraham de Moivre himself probably did not want to take credit for the formula. As a matter of fact, the relation only deals with one element: the probability of N consecutive successes (or failures). Everybody knows, that's $p^N$ (p raised to the power of N). It's like an axiom, a self-evident truth. Accordingly, nobody can take credit for an axiom. I thought Pascal deserves the most credit for establishing p = n / N. From there, it's easy to establish $p^N$. And give birth to so many more worthy numerical relations.

5. Mathematics of Ion Saliu's Paradox or Problem of N Trials

Another look at one of the steps leading to the Fundamental Formula of Gambling, this time with p = 1 / N:

$$DC = 1 - \left(1 - \frac{1}{N}\right)^{N}$$

I noticed a mathematical limit. I saw clearly: $\lim_{N \to \infty} (1 - 1/N)^N$ is equal to $1/e$ (e represents the base of the natural logarithm, approximately 2.71828182845904...). Therefore:

The limit of the degree of certainty is $1 - 1/e$, approximately 0.632120558828558...
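One standard way to see this limit is to take logarithms and expand them in a power series. The derivation below is a textbook sketch and is not necessarily the proof the author has in mind.

```latex
\begin{aligned}
\ln\left(1 - \frac{1}{N}\right)^{N}
  &= N \ln\left(1 - \frac{1}{N}\right)
   = N\left(-\frac{1}{N} - \frac{1}{2N^{2}} - \frac{1}{3N^{3}} - \cdots\right) \\
  &= -1 - \frac{1}{2N} - \frac{1}{3N^{2}} - \cdots
   \;\longrightarrow\; -1 \quad (N \to \infty), \\
\text{so}\quad \left(1 - \frac{1}{N}\right)^{N} &\longrightarrow e^{-1},
\qquad
1 - \left(1 - \frac{1}{N}\right)^{N} \longrightarrow 1 - \frac{1}{e} \approx 0.6321 .
\end{aligned}
```

Because every correction term in the exponent is negative, $(1 - 1/N)^N$ stays below $1/e$ for every finite N, so the degree of certainty stays above $1 - 1/e$ and decreases toward it.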

I tested for N = 100,000,000... N = 500,000,000 ... N = 1,000,000,000 (one billion) trials. The results ever so slightly decrease, approaching the limit from above but never falling below it!

When N = 100,000,000, then DC = .632120560667764...

When N = 1,000,000,000, then DC = .63212055901829...

(Calculations performed by SuperFormula, function C = Degree of Certainty (DC), then option 1 = Degree of Certainty (DC), then option 2 = The program calculates p.)

If the probability is p = 1 / N and we repeat the event N times, the degree of certainty DC is $1 - 1/e$ when N tends to infinity. I named this relation Ion Saliu's Paradox of N Trials.
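The convergence can be checked numerically. The sketch below is not how SuperFormula computes it; it is an independent check that uses `math.log1p` and `math.expm1`, because raising `1 - 1/N` to the power N directly in double precision loses accuracy when N is very large. The function name is an illustrative choice.

```python
import math

def dc_n_trials(n):
    """DC = 1 - (1 - 1/n)**n, computed via log1p/expm1 to limit rounding error."""
    return -math.expm1(n * math.log1p(-1.0 / n))

limit = 1.0 - 1.0 / math.e
for n in (100_000_000, 500_000_000, 1_000_000_000):
    dc = dc_n_trials(n)
    # The last column shows how far DC still sits above the limit 1 - 1/e.
    print(n, dc, dc - limit)
```

The difference in the last column shrinks as N grows but stays positive, in line with the values reported above.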

You can see the mathematical proof right here, for the first time. I created a PDF file with nicely formatted equations:

How long is in the long run? Or, how big is the law of BIG numbers? Ion Saliu's paradox of N trials makes it easy and clear. Let's repeat the number of trials in M multiples of N; e.g. play one roulette number in two series of 38 spins each. The formula becomes:

$$\left(1 - \frac{1}{N}\right)^{N M} \longrightarrow \left(\frac{1}{e}\right)^{M} \quad (N \to \infty)$$

Therefore, the degree of certainty becomes:

$$DC = 1 - \left(\frac{1}{e}\right)^{M}$$

If M tends to infinity, $(1/e)^M$ tends to zero, therefore the degree of certainty tends to 1 (certainty, yes, but not in a philosophical sense!).

Actually, relatively low values of M make the degree of certainty very, very nearly 100%. For example, if M = 20, DC is about 99.9999998%. If M = 50, the PCs of the day calculate DC = 100%. Of course, they can't approximate more than 18 decimal positions! Let's say we want to know how long it will take for all pick-3 lottery combinations to be drawn. The computers say that all 1000 pick-3 sets will come out within 50,000 drawings with a degree of certainty virtually equal to 100%.
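The effect of M can be explored with a short sketch. It assumes the degree of certainty for M multiples of N trials is 1 − (1 − 1/N)^(N·M), with limiting value 1 − (1/e)^M as N grows; the function names are hypothetical.

```python
import math

def dc_multiples(n, m):
    """DC of at least one hit in m*n trials when p = 1/n (finite n)."""
    return 1.0 - (1.0 - 1.0 / n) ** (n * m)

def dc_limit(m):
    """Limiting DC as n grows: 1 - (1/e)**m."""
    return 1.0 - math.exp(-m)

# One roulette number played over two series of 38 spins each.
print(dc_multiples(38, 2))

for m in (1, 20, 50):
    print(m, dc_limit(m))
```

In double-precision arithmetic the subtraction for M = 50 rounds to exactly 1.0, which illustrates the remark about the limited number of decimal positions a computer can carry.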

Ion Saliu's Paradox of N Trials refers to randomly generating one element at a time from a set of N elements. There is a set of N distinct elements (e.g. lotto numbered-balls from 1 to 49). We randomly generate (or draw) 1 element at a time for a total of N drawings (number of trials). The result will be around 63% unique elements and around 37% duplicates (more precisely named repeats).
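The 63% / 37% split is simple to simulate. The sketch below assumes uniform random draws with replacement; the function name, the seed, and the choice of N = 100,000 are illustrative only.

```python
import random

def unique_fraction(n, seed=49):
    """Draw n times with replacement from n elements; return the share of distinct elements seen."""
    rng = random.Random(seed)
    seen = {rng.randrange(n) for _ in range(n)}
    return len(seen) / n

# The result should be close to 1 - 1/e; the remainder of the draws are repeats.
print(unique_fraction(100_000))
```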

Let's look at the probability situation from a different angle. What is the probability of randomly generating N elements at a time with ALL N elements unique?

Let's say we have 6 dice (since a die has 6 faces or elements); we throw all 6 dice at the same time (a perfectly random draw). What is the probability that all 6 faces will be unique (i.e. from 1 to 6 in any order)? Total possible cases is calculated by the Saliusian sets (or exponents): $6^6$ (6 to the power of 6) or 46656. Total number of favorable cases is represented by permutations. The permutations are calculated by the factorial: 6! = 720. We calculate the probability of 6 unique point-faces on all 6 dice by dividing permutations by exponents: 720 / 46656 = 1 in 64.8.

We can generalize to N elements randomly drawn N at a time. The probability that all N elements are unique is equal to permutations over exponents. A precise formula reads:

$$P = \frac{N!}{N^{N}}$$
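The dice example and its generalization can be verified exactly. The sketch below assumes the formula is N! / N^N (permutations over exponents, as described above) and cross-checks it by brute-force enumeration of all six-dice outcomes; the function name is hypothetical.

```python
from fractions import Fraction
from itertools import product
from math import factorial

def all_unique_probability(n):
    """Exact probability that n draws from n elements are all distinct: n! / n**n."""
    return Fraction(factorial(n), n ** n)

# Brute-force check for six dice: count the outcomes that show all six faces.
favorable = sum(1 for roll in product(range(1, 7), repeat=6) if len(set(roll)) == 6)

p = all_unique_probability(6)
# Prints the favorable count, the exact probability, and the "1 in ..." odds.
print(favorable, p, float(1 / p))
```

Using `Fraction` keeps the result exact, which matters because N^N grows very quickly and floating-point division would hide small differences.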

I created another type of probability software that randomly generates unique numbers from N elements. I even offer the source code (totally free), plus the algorithms of random number generation (algorithm #2). For most situations, only the computer software can generate N random elements from a set of N distinct items. Also, only the software can generate Ion Saliu's sets (exponents) when N is larger than even 5. Caveat: today's computers are not capable of handling very large Saliusian sets!

I also wrote software to simulate Ion Saliu's Paradox of N Trials:

OccupancySaliuParadox, mathematical software also calculating the Classical Occupancy Problem.

Read more on my Web page: Theory of Probability: Introduction, Formulae, Software, Algorithms.

FORMULA calculates several mathematical, probability, and statistics functions: Binomial distribution; standard deviation; hypergeometric distribution; odds (probability) for lotto, lottery, and gambling; normal probability rule; sums and mean average; randomization (shuffle); etc.

Download Software: Science, Mathematics, Statistics, Lexicographic, Combinatorial: SuperFormula, FORMULA, OccupancySaliuParadox from the software downloads site - membership is necessary (a most reasonable fee).

I assembled all my mathematics, probability, statistics, combinatorics programs in a special software package named Scientia.

The following pages at this website offer more special mathematical solutions, functions and formulas, especially in combinatorics. There are algorithms and special software to calculate and generate permutations, exponents, combinations, both for numbers and words. Also, lexicographical order or index can be easily calculated for a large variety of sets.


6. Software: The Divine Tool to Further Empower Reason

I wrote software to handle the Fundamental Formula of Gambling (FFG) and its reverse: Anti-FFG or the Degree of Certainty. There are situations when we want to calculate the Degree of Certainty that an event of probability p will appear at least once within a number of trials N. As a matter of fact, this method offers a more precise correlation between an integer number of trials and a degree of certainty DC expressed as a floating-point number. Furthermore, the program can determine the probability from a data series! The number of elements in the data series is known (N). Sorting the data series can determine the median: The degree of certainty DC equal to 50%!
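Both directions can be sketched in a few lines. This is an illustration under assumptions, not the author's program: it takes the median of a series of skips as the N at which DC = 50% and inverts the relation DC = 1 − (1 − p)^N to estimate p. The function names and the sample data are made up for the example.

```python
from statistics import median

def degree_of_certainty(p, n):
    """Anti-FFG: chance of at least one hit within n trials."""
    return 1.0 - (1.0 - p) ** n

def probability_from_median(skips):
    """Estimate p from the median skip, assuming DC = 50% at the median."""
    n = median(skips)
    return 1.0 - 0.5 ** (1.0 / n)

sample_skips = [120, 310, 540, 693, 905, 1400, 2600]  # made-up illustration data
p_est = probability_from_median(sample_skips)

# Feeding the estimate back in should return a degree of certainty of about 50%.
print(p_est, degree_of_certainty(p_est, 693))
```

With a median skip near 693, the estimated probability comes out close to 1/1000, the pick-3 value used throughout.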

The 16-bit software was superseded by SuperFormula. SuperFormula also calculates the Binomial Distribution Formula (BDF), the Binomial Standard Deviation (BSD), the Statistical Standard Deviation, and then some.

Read Ion Saliu's first book in print: Probability Theory, Live!


Discover profound philosophical implications of the Formula of The Everything, including mathematics, formulas, gambling, lottery, software, computer programming, the logarithm function, and the absurdity of the God concept.

Resources in Theory of Probability, Mathematics, Statistics, Combinatorics, Software

See a comprehensive directory of the pages and materials on the subject of theory of probability, mathematics, statistics, combinatorics, plus software.

The Fundamental Formula of Gambling (FFG) may well be the Ultimate Formula of The Everything.